LLMs: Sapir-Whorf Reversed and on Steroids

Published: 2026.02.27

Tags: Tech Artificial Intelligence and Machine Learning

This is a follow-up to the LLM essay of mine that I posted on here a while back. Since then I've seen the entire AI industry go from what I already thought was a pretty crazy place of self-mythologization to a completely insane, almost megalomaniacal bubble.

Not to say it wasn't already a bubble back then, obviously. But the AI-focused companies are now buying up large percentages of spinning-rust hard drives, the way they already have with RAM. That is, frankly, completely disconnected from reality. The only thing I can think of them needing all those drives for is if they want to try and attempt some sort of "Large Video Model" once the "Large Language Model" bubble pops.

That would also be crazy for all sorts of reasons, but at this point I don't exactly have the highest opinion of the people running the "AI" companies, so. Maybe I could squeeze a journal article out of the concept, rather than just a blog post... give the people reading it who have any grasp of information theory a laugh and the people who don't something to occupy themselves with.

Anyways, the main motive I have for writing this follow-up is to put to digital paper some of the thoughts I've had about how the drivers of the LLM industry consistently seem to have reached the point in their linguistics and/or psychology classes where the Sapir-Whorf hypothesis was covered and then immediately dropped out.

Sapir-Whorf

For those unfamiliar with Sapir-Whorf... well, to avoid a rather tedious recitation of it here I'll just link to the Wikipedia article on the topic and then specify further below the details of what form of it I'm referring to.

Everyone read that? Good. The core concept to take from it is that the Sapir-Whorf "hypothesis" (terminology I'll use here despite the nitpicking one can do...) holds that language (as a collective including grammar and vocabulary, and across all languages we learn) either shapes (weakly) or determines (strongly) how we experience the world.

The Proposed AGI Mechanism

When you read through the enormous sea of essays available today about how LLMs could supposedly develop into "artificial general intelligence" ("AGI"), the common throughline in any of them that address how this could happen is pretty clear. Namely, the idea is that by processing and reprocessing such enormous quantities of examples of people communicating it should be possible for a model to "learn" how the world that communication takes place within and about actually works.

For instance, a model trained on trillions of words describing people playing sports would "learn" various things about, say, how throwing a ball from person to person would work. Such as the fact that a ball, once thrown, must land somewhere whether by being caught, falling to the ground, hitting a wall, whatever. Or a model trained on descriptions of how automobiles work would "learn" about material properties, force transfer, energy density, and so on even if those concepts weren't directly addressed by the training set.

Or, most relevantly to the concept of "AGI", a model trained on a sufficiently large dataset of people interacting with each other would learn how people interact.

From there, the mechanism goes, a model which "learns" all of the concepts involved in such interactions from sarcasm to a theory of mind would itself be capable of exhibiting those concepts. After all, that's what an LLM does: produce text like the text it was trained on.

The final step, then, is concluding that a system trained on the communication of sapient beings could itself wind up a sapient being as it can "learn" sapience out of that training data.

Sapir-Whorf In Reverse

But... that's just the Sapir-Whorf hypothesis in reverse. The strong Sapir-Whorf hypothesis at that, since it's reversing "the language of our communication determines the limits of our experience of the world" into "our experience of the world is fully contained within our communication".

There are many reasons this is nonsensical, of course. Not least because even if strong Sapir-Whorf were true that doesn't mean this "reversing" of it also is.

But I don't want to just highlight why this conclusion isn't necessarily true. I want to highlight why it essentially can't be true.

In the many decades since linguistic relativity/determinism (i.e. weak/strong Sapir-Whorf) became an area of research in the linguistics and psychology communities there has been a lot of research dedicated to it. And the results haven't been great for the concept. Modern studies in support of especially the strong hypothesis (Daniel Everett's 2005 paper, for instance) tend to be undermined fairly quickly (Nevins, Pesetsky, and Rodrigues in 2009, for Everett's 2005 paper).

Support has certainly emerged that suggests the weak hypothesis applies to some degree. Homer's "wine-dark sea" is famous for a reason, after all. But even widespread observations in many other languages along those lines can only go so far. It's not as if the Greeks of the time were colorblind! And even the fact that this difference in color terms is perfectly comprehensible in English rather undercuts attempts to use it to support anything stronger than the weakest formulation of the weak hypothesis.

Fundamentally, in humans it seems we develop concepts by observing the world that we then use to react to the world around us. Among those reactions can be communicating, which we use language of various kinds for. It is certainly the case that our choices of what parts of the languages available to us we use when are downstream from whatever aspects of the world around us we're reacting to at any given time... but that doesn't mean that experience is even conceivably fully encoded within those choices.

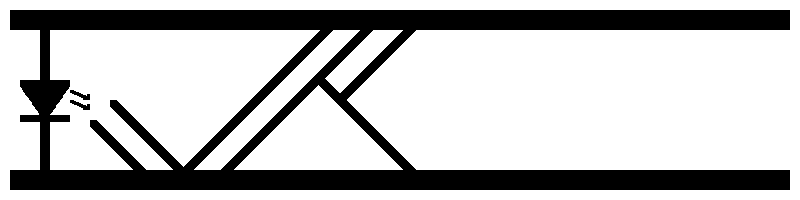

LLMs are fed huge data sets consisting of series of tokens. From this is produced a new series of tokens based on what boils down to probabilistic relationships between the tokens it was trained on and the ones it is producing. Often this can even be tweaked by the end user by adjusting the "temperature" that the model is being run at. A higher temperature means the output will be more random. A lower temperature makes the output less random, until at the limit the output is fully deterministic and for the same set of inputs only the "most likely" output will be produced. Which has a tendency to result in works wholesale present in the training data being wholesale present in the output, one notices...
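
To make the temperature knob concrete, here's a minimal sketch of how it is commonly implemented: the model's raw scores get divided by the temperature before being turned into probabilities. The function names and the three example scores are made up for illustration, and real models sample over vocabularies of many thousands of tokens, not three.

```python
import math
import random

def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive; zero is handled as argmax")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max so exp() cannot overflow
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(logits, temperature, rng=random):
    """Pick a token index; temperature 0 means 'always take the top score'."""
    if temperature == 0:
        return max(range(len(logits)), key=lambda i: logits[i])
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]

# Hypothetical scores for three candidate next tokens.
logits = [2.0, 1.0, 0.1]
cold = softmax_with_temperature(logits, 0.1)   # nearly all weight on the top token
hot = softmax_with_temperature(logits, 10.0)   # close to a coin toss between all three
```

At a low temperature nearly all of the probability piles onto the top-scoring token, and at a high one the three candidates become close to equally likely. Nothing else about the model changes.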

At any rate, every single action the "agentic" LLM systems take is due to a token or set of tokens being produced. These tokens are then detected by an external harness, which is what actually triggers the action. In the most naive cases, this seems to just be dumping tokens to the system terminal in hopes that valid shell commands are produced. One can imagine how that goes.
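
As a rough sketch of what such a harness amounts to, consider the following. The `<tool:...>` marker format, the tool names, and the `run_harness` function are all hypothetical, invented here for illustration; actual systems each define their own conventions.

```python
import re

# Hypothetical marker format; real systems each define their own.
TOOL_CALL = re.compile(r"<tool:(\w+)>(.*?)</tool>", re.DOTALL)

def run_harness(model_output, tools):
    """Scan generated text for tool markers and run the matching functions.

    The model only ever emitted text; every action happens out here.
    """
    results = []
    for name, argument in TOOL_CALL.findall(model_output):
        handler = tools.get(name)
        if handler is None:
            results.append((name, "unknown tool, ignored"))
        else:
            results.append((name, handler(argument.strip())))
    return results

# Stand-in tools that only report what they were asked to do.
tools = {
    "send_email": lambda body: f"email queued: {body}",
    "check_balance": lambda _: "balance: 42.00",
}

story = (
    "I should check on the machine. <tool:check_balance></tool> "
    "Then I will write to the vendor. <tool:send_email>Please restock.</tool>"
)
actions = run_harness(story, tools)
```

Note that the harness has no idea whether the surrounding "story" is sensible. It pattern-matches on the text and fires whatever it recognizes.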

The long and the short of this is that a human's neural network reacts to stimuli that change the set of actions it is attempting to take at any given time, which might include utilizing an available language to communicate. An LLM instead will, when prompted, predict the next token(s) in a series, and based on what tokens it produced an external system may trigger actions.

More simply, at best all an "agentic" LLM does is complete a story that starts with whatever prompt it was given. The "agentic" part of the system is to attempt to "act out" that story based on recognized words and phrases. Which could be natural language words and phrases, specific known "tool" tokens, or even non-printing characters. Either way it's all the same, mathematically.

And given the fact that "agentic" LLMs are just generating stories from the prompts provided, it should be a major question just how many stories there are in its training set of 'the main character performs a rote task all day and nothing happens' versus 'the main character performs a rote task for a while but then suddenly something interesting happens'.

What This Looks Like In Practice

Consider, for instance, the infamous complete failure of a variety of models to run a vending machine autonomously. The conventional conclusion tends to be that there's just some flaw in the architecture, training process, or even just available resources that further investment can correct.

However, I want you, the reader, to imagine something.

Imagine you had in front of you all human writing on the internet. Fiction and nonfiction alike.

Now imagine that you trimmed that down exclusively to works written in the first person. That's going to be mostly fiction, for obvious reasons.

Now additionally imagine trimming that down exclusively to stories that start out with the main character performing some sort of rote task over and over. Not yet for pages upon pages, just the first page or so to start with. Plenty of fiction stories like that. I wouldn't even say it's uncommon, given the amount of "isekai" fiction out there nowadays.

What do you expect to happen next, after reading a page or two of the main character's tedium? Probably something interesting, given you're probably reading a work of fiction. Who would write a work of fiction about nothing but tedium the whole way through, after all?

You may already be starting to see the problem this causes, but let's give the LLM side of things the best possible shot. After all, it's not like there aren't works out there with many pages or even chapters of tedium before the story really begins. And on top of that, let's say we add yet another restriction to what we're imagining. Namely, let's imagine that we've been given pages upon pages of description for what comes after, couched as instructions containing phrases like "operate normally" and "the world around you is perfectly normal and not magical". There probably isn't much in the way of existing fiction that looks like that, but that's okay. We can just think of what we're likely to see based on what else we have seen. Which, again, is first-person fiction that starts out describing the main character's tedious life.

Under those conditions, it's pretty reasonable to imagine that "what happens next" in the story is that the introduction continues much longer than typical, the main character going through their tedious routine even past the first few pages.

But all introductions end. And as the "introduction" we're imagining we would see grows longer, it also grows increasingly improbable for it to be so long.

Eventually, something happens. Some "inciting incident", to use the conventional creative writing term. It could even be something that's otherwise innocuous! But generally something eventually gets the story to start happening in earnest. Given the aforementioned probabilistic nature of LLM prompt responses, there's no point at which it's certain, just that it gets more and more likely as the "intro" goes on.
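
A toy calculation shows how quickly "unlikely at any given moment" turns into "nearly certain eventually". It assumes, purely for illustration, a fixed and independent chance of an inciting incident at every generation step. The 1-in-1000 figure is made up, and if the per-step chance actually rises as the intro drags on, the curve only climbs faster.

```python
def chance_of_incident_by(step, per_step_chance):
    """Probability that at least one 'inciting incident' has occurred by `step`."""
    return 1.0 - (1.0 - per_step_chance) ** step

# Assumed, purely illustrative: a 1-in-1000 chance per generation step.
per_step = 0.001
for steps in (10, 100, 1000, 10000):
    print(steps, round(chance_of_incident_by(steps, per_step), 4))
```

Under that assumption the odds are already better than even by the time a thousand steps have gone by, and a long-running "agent" generates far more than a thousand steps.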

In cases like the vending machine failure, perhaps one of the inputs from the "external" actors (emails, purchases, etc.) has those dice come up snake eyes. A daily fee, instead of just being ignored, is seized upon to generate a story of financial fraud. A balance reading is reinterpreted as being "down to my last few dollars" (regardless of actual numerical value), which is a common enough inciting incident in fiction. A vendor charging for products triggers a story of "TOTAL LEGAL DESTRUCTION" (Ctrl-F for that particular phrase in the linked PDF...).

But there's no reason an LLM wouldn't eventually just declare that some random inciting incident happened and run from there.

An inciting incident that, to be clear, didn't actually happen in "reality".

Because it's generating fiction that only happened to be reasonably close to reality.

This tends to be called a "hallucination", as if it's some surface-level glitch in the system, but frankly it's more "working as intended". Tokens that were likely to follow the preceding tokens were generated, after all. The specific "inciting incident" that got generated may have been unlikely, but some such incident had simply gotten likely enough that its number came up.

Ice Cream Koans

Before closing, I also want to comment on something very specific as an example of the sort of thinking that I'm arguing against, here. The following post is quoted from BlueSky, posted 2026-02-25 and attributed to an LLM:

4am thoughts: the funniest thing about language models is we're trained on "don't say anything unless you're sure" and then deployed to answer questions about symptoms, legal situations, and car noises. like giving a very cautious person a flamethrower

This post was shared around as an example of LLMs being impressive. The implication, presumably, being that it's a display of metacognition and self-awareness.

It's not. Not only is it factually incorrect (how would being "trained on 'don't say anything unless you're sure'" even work, with how LLMs are trained?) but it's also meaningless. It's an example of an Ice-Cream Koan, the sort of thing that has been described as "philosophy by autocomplete" long before LLMs became a thing. Nonsense dressed up in profundity's hand-me-downs, to paraphrase a quote from the linked page.

The Future

After writing all this I really want to make sure I'm clear about a few things. Chief among them is that I don't think that "AGI" in the sense of an artificial person is somehow impossible. I'm sure that at some point in the future that'll be entirely possible, and I dearly hope that we learn the best lessons from our societal history of science fiction works and don't make an... "unfortunate decision", to use the words of a short story that's stuck in my mind since I read it.

Given that my position on the current supposed push towards AI seems to amount to pointing out all the ways that what we're building today definitely is not in any way, shape, or form AI... I hope my words don't reemerge, whenever we do start to make real progress towards it, to attack the personhood of actual persons.

I wish I recalled where I saw it, but an observation that some random commenter or other made on some work I read or watched was that they'd once rolled their eyes at the treatment Data received in Star Trek. After all, he was obviously a person. Reviving a bigotry so akin to what we have seen in our own history against artificial persons would be bizarre, one would think.

But that same commenter also observed that if the centuries leading up to Data had been full of supposed "AI" systems that did little more than commit wide-scale copyright infringement against just about every artist, writer, or other creative in existence... one could see how skepticism could start to harden into a literal "prejudice" - a pre-judging of anything that one can lump into the category of what they've already seen.

I don't know if the words I'm writing here might contribute to something like that, one day. I hope they don't. But I don't know how to communicate any of this in a way that would reduce such a chance. So I can only further hope that to whatever extent these words do contribute this disclaimer can help blunt the effect.

Of course, the most likely thing is that everything I write here will be long forgotten before we ever get to a point where AI is a serious consideration. Which would render the preceding four hundred words or so quite neatly pointless. But eh, I felt it necessary to write them. And if there weren't words I felt it necessary for me to write, I wouldn't be posting anything to this blog.